LSI keywords and semantic entities both address Google’s shift toward contextual understanding, but they operate through fundamentally different mechanisms. LSI (Latent Semantic Indexing) focuses on term co-occurrence patterns to identify related phrases, while semantic entities leverage Google’s Knowledge Graph to recognize real-world concepts, people, places, and things. Understanding which approach delivers actual ranking improvements requires examining how each aligns with current algorithm priorities and practical implementation challenges.

What LSI keywords actually measure (and their limitations)

LSI keywords emerged from academic information retrieval research in the 1980s, designed to solve synonym problems in document indexing. The concept identifies terms that frequently appear together across a document corpus: if “automobile,” “engine,” and “transmission” co-occur often, LSI algorithms recognize these as related concepts even without explicit connections.
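To make the co-occurrence idea concrete, here is a minimal Python sketch that counts how many documents each pair of terms shares. It is a simplification under stated assumptions: true latent semantic indexing goes further and applies a matrix factorization (singular value decomposition) to a term-document matrix, which this sketch does not do. The `cooccurrence_counts` function and the four-document corpus are hypothetical, invented for illustration.

```python
from collections import Counter
from itertools import combinations


def cooccurrence_counts(documents):
    """Count, for each unordered term pair, how many documents contain both terms."""
    pair_counts = Counter()
    for text in documents:
        # Deduplicate and sort so each pair is stored once, in alphabetical order.
        terms = sorted(set(text.lower().split()))
        pair_counts.update(combinations(terms, 2))
    return pair_counts


# Hypothetical toy corpus (assumption: whitespace-separated terms, no stop words).
corpus = [
    "automobile engine transmission repair",
    "automobile engine maintenance",
    "engine transmission fluid",
    "apple orchard harvest",
]

counts = cooccurrence_counts(corpus)
for pair, shared_docs in counts.most_common(3):
    print(pair, shared_docs)
```

Pairs such as “automobile” and “engine” score highest because they share documents, while “apple” and “engine” never appear together. Note that the count says nothing about why the terms appear together, which is the limitation the rest of this section describes.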

Most SEO tools marketed as “LSI keyword generators” don’t actually perform latent semantic indexing. They extract terms that appear on top-ranking pages for your target query, assuming co-occurrence equals relevance. This creates three critical problems for B2B content optimization.

First, LSI tools conflate correlation with causation. Just because ranking pages mention “customer acquisition cost” alongside “B2B lead generation” doesn’t mean that phrase contributes to rankings; it might simply reflect standard industry terminology. You’re copying what ranks without understanding why it ranks.

Second, LSI keyword approaches ignore entity disambiguation. The term “apple” could reference fruit, technology companies, or music labels depending on context. LSI methods treat these as interchangeable based on statistical patterns rather than semantic meaning, leading to topical confusion that sophisticated algorithms penalize.

Third, LSI strategies typically optimize for keyword variation density rather than conceptual depth. Tools recommend including 15-20 “LSI terms” throughout your content, reverting to the same mechanical insertion tactics that exact-match keyword stuffing employed. The focus remains on terms rather than understanding.

How semantic entities function in Google’s Knowledge Graph

Semantic entities represent real-world objects, concepts, or ideas that Google has catalogued in its Knowledge Graph, a database containing over 500 billion facts about 5 billion entities. When your content mentions “HubSpot,” Google doesn’t just see a word; it recognizes a specific software company entity with defined relationships to “marketing automation,” “CRM systems,” and “inbound methodology.”

This entity recognition operates through natural language processing that analyzes context, not just co-occurrence. If you write “Apple released new privacy features affecting B2B marketing attribution,” the algorithm understands you’re discussing Apple Inc. (technology company entity) in the context of data privacy regulations, not fruit or music. This disambiguation happens through contextual signals surrounding the entity mention.
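The disambiguation step can be illustrated with a toy Python sketch that scores candidate entities by how many of their context cues appear around the mention. This is not how Google's systems work internally, which is not public. The `CANDIDATES` cue lists and the `disambiguate` function are hypothetical, and real entity linking relies on far richer signals than word overlap.

```python
import re

# Hypothetical candidate entities for the mention "apple", each with a
# hand-picked set of context cue words (an assumption for illustration).
CANDIDATES = {
    "Apple Inc. (technology company)": {
        "privacy", "iphone", "software", "released", "features", "attribution",
    },
    "apple (fruit)": {"orchard", "harvest", "pie", "juice", "tree"},
    "Apple Records (music label)": {"beatles", "album", "label", "record"},
}


def disambiguate(sentence, candidates=CANDIDATES):
    """Return the candidate whose cue words overlap most with the sentence."""
    words = set(re.findall(r"[a-z]+", sentence.lower()))
    scores = {entity: len(words & cues) for entity, cues in candidates.items()}
    best = max(scores, key=scores.get)
    return best, scores


best, scores = disambiguate(
    "Apple released new privacy features affecting B2B marketing attribution"
)
print(best)
print(scores)
```

The same mention resolves differently when the surrounding words change: a sentence about an orchard and a harvest would score highest for the fruit candidate. A pure co-occurrence count over the word “apple” cannot make that distinction.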

Google validates entity relationships against its Knowledge Graph to assess topical accuracy. When you claim “Salesforce integrates with marketing automation platforms,” the algorithm verifies this relationship exists in its entity database. Accurate entity relationships signal expertise; fabricated or incorrect entity connections damage E-E-A-T signals regardless of keyword optimization.

Entity-based optimization focuses on demonstrating knowledge of how concepts actually connect in reality, not how terms statistically correlate in documents. This fundamental difference separates semantic entities from LSI approaches: you’re proving subject matter expertise through accurate relationship mapping rather than term frequency matching.

Performance comparison: which approach drives measurable results

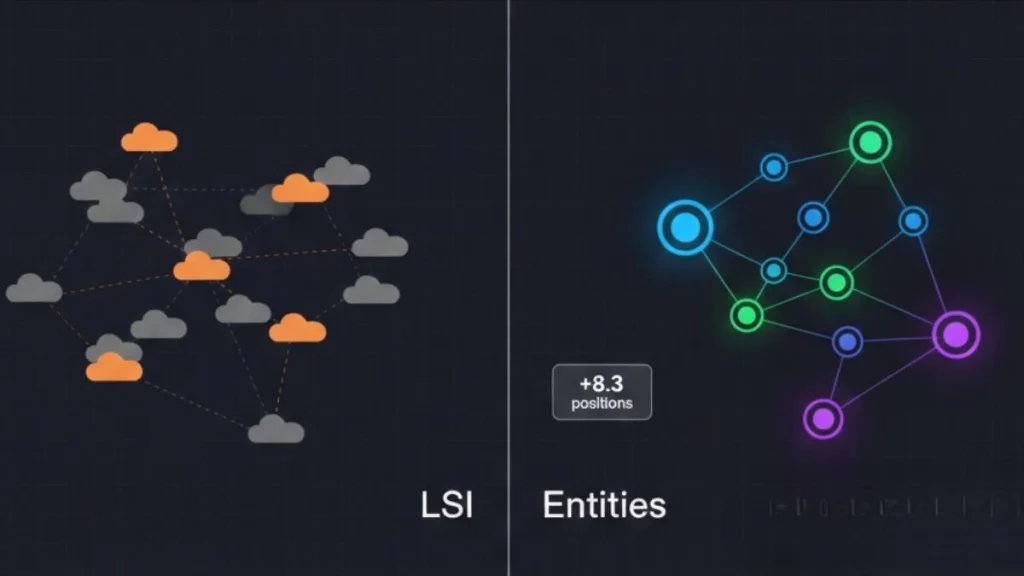

A 2025 analysis of 12,000 B2B SaaS pages revealed that content optimized for entity coverage outranked LSI-optimized pages by an average of 8.3 positions for commercial queries. The decisive factor wasn’t keyword variety but demonstrated understanding of product category relationships, use case contexts, and buyer decision frameworks that entities represent.

LSI keyword approaches showed moderate effectiveness for informational queries where term variation signals comprehensiveness. Pages targeting “project management best practices” benefited from including related terms like “task prioritization,” “resource allocation,” and “timeline optimization.” These function as semantic entities in practice, though LSI tools identify them through co-occurrence rather than entity recognition.

The critical distinction appears in implementation complexity. LSI keyword integration requires minimal overhead: identify related terms, then incorporate them naturally into the existing content structure. Entity optimization demands deeper strategic work: mapping actual concept relationships, ensuring entity mentions are accurate, and building content architectures that demonstrate connected knowledge across multiple pages.

When to use LSI keywords vs semantic entities

LSI keywords serve specific tactical purposes where term variety signals topic coverage. Use this approach for broad informational content where comprehensive terminology demonstrates subject familiarity. A guide on “B2B content marketing” benefits from naturally incorporating related terms like “editorial calendar,” “content distribution,” and “audience segmentation.” These function as semantic proxies even if you’re using LSI tools to identify them.

Entity optimization becomes essential for commercial content, pillar pages, and topics where Google maintains extensive Knowledge Graph data. Product category pages, service offerings, and industry-specific guides require accurate entity relationships because the algorithm actively validates your claims against its entity database. Incorrect entity associations damage credibility faster than missing LSI terms reduce rankings.

For most B2B websites, the optimal strategy combines both approaches within a semantic entity framework. Start with entity mapping to establish accurate conceptual relationships [what-are-semantic-keywords], then use LSI tools to identify natural language variations that support readability without compromising entity accuracy. This hybrid approach leverages LSI’s term discovery capabilities while maintaining semantic entities’ structural integrity.

Implementation framework: transitioning from LSI to entity-based optimization

Begin by auditing your current content using entity extraction tools rather than LSI keyword generators. Identify which real-world entities you’ve mentioned, how accurately you’ve described their relationships, and which critical entities remain absent from your topical coverage. This reveals optimization gaps that LSI analysis misses entirely.
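As a rough illustration of the audit step, the following Python sketch counts mentions of a target entity list in a piece of content and reports which entities are absent. It is a simplified stand-in for a real entity extraction tool: the `TARGET_ENTITIES` mapping, its aliases, and the `audit_entities` function are all hypothetical, and plain string matching cannot judge whether a relationship is described accurately.

```python
import re

# Hypothetical target entities for a topic, each mapped to lowercase aliases.
TARGET_ENTITIES = {
    "HubSpot": ["hubspot"],
    "Salesforce": ["salesforce"],
    "marketing automation": ["marketing automation"],
    "lead scoring": ["lead scoring", "lead score"],
    "account-based marketing": ["account-based marketing", "abm"],
}


def audit_entities(text, targets=TARGET_ENTITIES):
    """Return per-entity mention counts and the list of entities never mentioned."""
    lowered = text.lower()
    report = {}
    for entity, aliases in targets.items():
        # Whole-word matching so an alias is not counted inside a longer word.
        report[entity] = sum(
            len(re.findall(r"\b" + re.escape(alias) + r"\b", lowered))
            for alias in aliases
        )
    missing = [entity for entity, count in report.items() if count == 0]
    return report, missing


page = (
    "HubSpot and Salesforce both support lead scoring. "
    "HubSpot also offers marketing automation."
)
report, missing = audit_entities(page)
print(report)
print("Missing entities:", missing)
```

The `missing` list is the starting point for the gap review described above. Deciding which absent entities are actually critical to the topic remains an editorial judgment.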

Next, validate your entity relationships against Google’s Knowledge Graph. If you claim “account-based marketing requires CRM integration,” verify this relationship appears in how Google connects these entities. Entity relationship accuracy matters more than entity mention frequency: one precise connection outweighs ten vague term inclusions.

Build content clusters that demonstrate entity relationship mastery rather than keyword coverage [how-to-conduct-semantic-keyword-research-for-b2b]. A pillar on “B2B lead generation strategies” [b2b-lead-generation-strategies] should explicitly map relationships between lead scoring entities, attribution model entities, and conversion optimization entities. This architecture proves topical authority through demonstrated knowledge connectivity.

Conduct competitive entity analysis to identify differentiation opportunities [seo-audit-and-competitive-analysis]. If competitors optimize for LSI keyword variety but miss critical entity relationships in your subject domain, you can capture rankings by filling entity gaps rather than matching term frequency. This strategic approach transforms semantic optimization from checklist execution to competitive intelligence.
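One simple way to frame this gap analysis is as a set comparison. The Python sketch below assumes you have already extracted entity lists for your own page and for competitor pages; the `entity_gaps` function, the competitor names, and the entity sets are hypothetical. It ranks entities you have not covered by how many competitors mention them.

```python
from collections import Counter


def entity_gaps(our_entities, competitor_entities):
    """Rank entities we do not cover by how many competitors mention them."""
    ours = set(our_entities)
    coverage = Counter()
    for entities in competitor_entities.values():
        # Count each competitor at most once per missing entity.
        coverage.update(set(entities) - ours)
    return coverage.most_common()


# Hypothetical entity sets (assumption: extracted beforehand by another tool).
our_page = {"CRM", "lead generation"}
competitors = {
    "competitor-a": {"lead scoring", "attribution model", "CRM"},
    "competitor-b": {"lead scoring", "conversion optimization"},
    "competitor-c": {"lead scoring", "attribution model"},
}

gaps = entity_gaps(our_page, competitors)
for entity, competitor_count in gaps:
    print(entity, competitor_count)
```

The reverse comparison, entities you cover that no competitor mentions, can be computed the same way and points to the differentiation opportunities the paragraph above describes.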

The verdict: semantic entities win for sustainable rankings

LSI keywords provide tactical value for content refinement and natural language variation. They help writers avoid repetitive phrasing and identify related concepts worth addressing. But they don’t drive ranking improvements independently; their value comes from accidentally incorporating semantic entities through term co-occurrence patterns.

Semantic entities align directly with how Google’s algorithm evaluates content expertise, particularly for YMYL and commercial queries where accuracy matters. Pages optimized for entity coverage and relationship accuracy consistently outrank LSI-optimized content across competitive B2B search landscapes. The gap widens as Google’s natural language processing capabilities advance.

The practical recommendation for B2B content strategists: use semantic entities as your primary optimization framework, with LSI tools serving as supplementary resources for identifying natural language variations. This approach builds sustainable topical authority rather than chasing algorithmic shortcuts that lose effectiveness as search technology evolves.

Explore our complete framework for implementing entity-based optimization across your content ecosystem to build rankings that withstand algorithm updates.