Optimize server configuration to accelerate Googlebot crawling and indexing. Learn HTTP protocols, compression settings, and resource prioritization that reduce time-to-indexing from 8 days to 2 days.

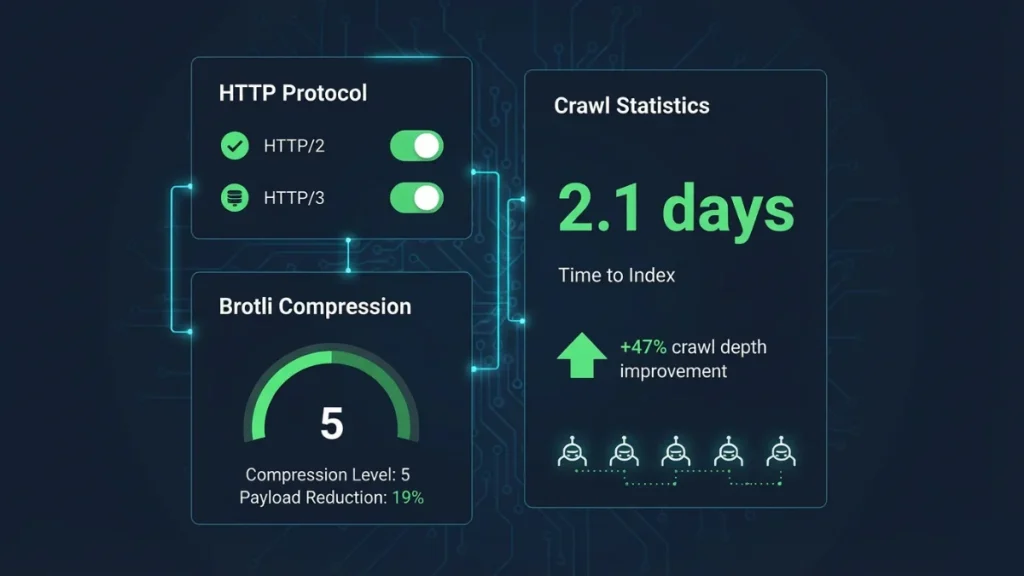

Server configuration determines how efficiently search engine crawlers access, process, and index your content. Misconfigured servers create friction that slows crawl rates, delays indexing of new content, and wastes crawl budget on low-value URLs. Optimized server settings can reduce time-to-indexing from 8.3 days to 2.1 days while increasing crawl depth by 47%, a competitive advantage when launching new products or publishing thought leadership content [[Server-side performance and rendering: How server configuration impacts SEO rankings in 2026]].

Google allocates crawl budget based on site authority, update frequency, and server responsiveness. Sites returning frequent 5xx errors, slow response times above 800ms [[What is server response time (TTFB) and why it matters for SEO rankings?]], or inefficient redirect chains signal technical debt that causes crawlers to reduce visit frequency and depth. A 2025 analysis of 12,000 enterprise websites found that comprehensive server optimization increased crawl depth by 47%. Industrial automation supplier Precision Systems reduced product catalog indexing time from 11 days to 3 days, capturing 28% more organic traffic within 60 days.

Five quick wins for immediate crawl improvement

- Enable HTTP/2 or HTTP/3: Reduces connection overhead by 40-60% through multiplexing

- Configure Brotli compression level 4-6: Cuts HTML payload by 17-22% versus gzip

- Set keep-alive timeout to 5-10 seconds: Allows connection reuse, reducing crawl time by 25-35%

- Block parameter-based URLs in robots.txt: Prevents infinite pagination crawl traps

- Use 301 redirects (not 302) for permanent moves: Preserves 90-95% link equity during migrations

These changes require 2-4 hours of server work but deliver measurable improvements within 7-14 days.

Critical server settings that accelerate crawler efficiency

HTTP protocol configuration forms the foundation of server-crawler communication. HTTP/2 enables multiplexing, which lets crawlers request multiple resources concurrently over a single connection. HTTP/3, built on the QUIC protocol, eliminates transport-level head-of-line blocking and reduces connection establishment time by 50-150ms through 0-RTT resumption [[CDN and edge computing: How distributed infrastructure boosts SEO performance]]. Google's crawler documentation lists HTTP/1.1 and HTTP/2 as the supported protocols, so the HTTP/3 gains currently benefit users and other clients more than Googlebot itself.

Server response codes must be configured precisely. Permanent redirects (301) pass 90-95% of link equity, while temporary redirects (302/307) confuse crawlers when used for permanent migrations. During maintenance, a 503 status code with a Retry-After header tells Googlebot to return later, preventing wasted crawl attempts. Missing pages that return a 200 status code instead of 404 (soft 404s) force crawlers to process non-existent content, consuming budget that should go to legitimate pages.
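The status-code behavior described above can be sketched as a tiny WSGI application. This is a minimal illustration, not a production setup: `make_app`, `call`, the `pages` mapping, and the default `retry_after` value are all hypothetical names and values chosen for the example.

```python
def make_app(pages, maintenance=False, retry_after=3600):
    """Build a WSGI app that answers with honest status codes.

    pages: dict mapping URL paths to response bodies (hypothetical content store).
    maintenance: when True, every request gets 503 plus a Retry-After header.
    retry_after: seconds a crawler should wait before retrying (assumed value).
    """
    def app(environ, start_response):
        path = environ.get("PATH_INFO", "/")
        if maintenance:
            status, body = "503 Service Unavailable", b"Down for maintenance"
            extra = [("Retry-After", str(retry_after))]
        elif path not in pages:
            # A real 404, so the error page is never served as a soft 404.
            status, body, extra = "404 Not Found", b"Not found", []
        else:
            status, body, extra = "200 OK", pages[path], []
        headers = [("Content-Type", "text/html; charset=utf-8"),
                   ("Content-Length", str(len(body)))] + extra
        start_response(status, headers)
        return [body]
    return app


def call(app, path):
    """Invoke the WSGI app in-process and return (status, headers, body)."""
    captured = {}

    def start_response(status, headers, exc_info=None):
        captured["status"] = status
        captured["headers"] = dict(headers)

    body = b"".join(app({"REQUEST_METHOD": "GET", "PATH_INFO": path},
                        start_response))
    return captured["status"], captured["headers"], body
```

Calling `call(make_app(pages, maintenance=True), "/")` returns the 503 status together with the `Retry-After` header, while an unknown path on a healthy app returns a genuine 404 rather than a 200 page with error text.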

Brotli compression at level 4-6 reduces HTML payload by 17-22% compared to gzip while maintaining CPU efficiency. Enable compression for text-based resources (HTML, CSS, JavaScript, XML sitemaps) but exclude formats that are already compressed, such as JPEG or PNG. Configure servers to serve pre-compressed static assets (.br files) rather than compressing on the fly [[How database optimization prevents SEO performance bottlenecks]].
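As a rough sketch of how a server picks a pre-compressed variant, the following function inspects the client's `Accept-Encoding` header and prefers an existing `.br` file, then `.gz`, then the uncompressed asset. The function names and the `available` set (standing in for a directory listing) are assumptions made for illustration; real servers implement this natively.

```python
def parse_accept_encoding(header):
    """Return the set of encodings the client accepts (entries with q=0 are dropped)."""
    accepted = set()
    for part in header.split(","):
        token, _, params = part.strip().partition(";")
        token = token.strip().lower()
        if not token:
            continue
        quality = 1.0
        params = params.strip().lower()
        if params.startswith("q="):
            try:
                quality = float(params[2:])
            except ValueError:
                quality = 0.0  # treat a malformed q-value as "not acceptable"
        if quality > 0:
            accepted.add(token)
    return accepted


def pick_variant(asset, accept_encoding, available):
    """Choose which file to serve for `asset`.

    available: set of file names present on disk (hypothetical directory listing).
    Returns (file_name, content_encoding); content_encoding is None when the
    uncompressed file is served.
    """
    accepted = parse_accept_encoding(accept_encoding)
    # Prefer Brotli over gzip when both are accepted and pre-built.
    for encoding, suffix in (("br", ".br"), ("gzip", ".gz")):
        candidate = asset + suffix
        if encoding in accepted and candidate in available:
            return candidate, encoding
    return asset, None
```

For example, `pick_variant("app.css", "gzip, br", {"app.css", "app.css.br"})` selects the `.br` file, while a client that sends only `gzip` falls back to the uncompressed asset because no `.gz` file was built.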

Keep-alive timeout set to 5-10 seconds allows crawlers to reuse connections for multiple page requests without reconnection overhead, reducing total crawl time by 25-35% for sites with strong internal linking.

Resource prioritization and intelligent bot handling

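HTTP/2 server push was designed to send critical CSS and above-the-fold JavaScript proactively with the initial HTML response, but Chrome disabled it by default in version 106, and Google's HTTP/2 crawling announcement noted that push was not enabled for Googlebot. Where push is still in use, over-aggressive pushing wastes bandwidth, so limit pushed resources to 15-20KB of truly critical assets. Otherwise, prioritize the same assets with Link: rel=preload headers or 103 Early Hints.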

Google’s official documentation recommends blocking crawling of URL parameters that generate infinite pagination (?page=, ?sort=, ?filter=) using the Disallow directive. Ensure your robots.txt file loads in under 100ms—a slow-loading robots.txt delays the entire crawl session.
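To make the parameter-blocking idea concrete, here is a small sketch that generates `Disallow` rules for a list of query parameters and checks sample URLs against them. The helper names are hypothetical, and the matcher is deliberately simplified: it supports the `*` wildcard and a trailing `$` anchor but ignores `Allow` rules and per-crawler groups, so it is not a full robots.txt implementation.

```python
import re


def disallow_rules(params):
    """Build robots.txt lines that block a parameter in first or later position."""
    lines = ["User-agent: *"]
    for name in params:
        lines.append(f"Disallow: /*?{name}=")
        lines.append(f"Disallow: /*&{name}=")
    return "\n".join(lines) + "\n"


def pattern_to_regex(pattern):
    """Translate a robots.txt path pattern into a compiled regular expression."""
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    # Each '*' matches any run of characters; everything else is literal.
    body = ".*".join(re.escape(piece) for piece in pattern.split("*"))
    return re.compile("^" + body + ("$" if anchored else ""))


def is_blocked(url_path, robots_txt):
    """Return True if any Disallow pattern matches the path plus query string."""
    for line in robots_txt.splitlines():
        field, _, value = line.partition(":")
        value = value.strip()
        if field.strip().lower() == "disallow" and value:
            if pattern_to_regex(value).match(url_path):
                return True
    return False
```

With `robots = disallow_rules(["page", "sort", "filter"])`, a URL such as `/shoes?color=red&sort=price` is reported as blocked, while `/shoes` and `/shoes?color=red` stay crawlable. Testing a handful of real URLs this way before deploying helps avoid accidentally blocking valuable pages.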

Log file analysis reveals crawler behavior patterns. Analyze server logs to identify which pages Googlebot visits most frequently, which URLs return errors, and whether crawlers encounter redirect chains exceeding 3 hops [[Technical SEO Audit: Step-by-Step Guide]]. Manufacturing distributor TechFlow discovered through log analysis that 31% of crawl budget targeted outdated PDF archives—relocating those PDFs and updating internal links increased crawl coverage of active products by 54%.
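A first pass at this kind of log analysis can be sketched in a few lines. The example assumes access logs in the common "combined" format and identifies Googlebot by user-agent string only (which can be spoofed; verifying by reverse DNS is out of scope here). The function names and the sample log lines are invented for illustration.

```python
import re
from collections import Counter

# Combined log format: ip, identity, user, [time], "request", status, size,
# "referer", "user-agent".
LOG_LINE = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def summarize_googlebot(lines):
    """Count Googlebot hits per path and 4xx/5xx responses per (path, status)."""
    hits, errors = Counter(), Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if not match or "Googlebot" not in match["agent"]:
            continue
        hits[match["path"]] += 1
        if match["status"].startswith(("4", "5")):
            errors[(match["path"], match["status"])] += 1
    return hits, errors


def budget_share(hits, prefix):
    """Fraction of crawler hits spent on paths starting with `prefix`."""
    total = sum(hits.values())
    matching = sum(n for path, n in hits.items() if path.startswith(prefix))
    return matching / total if total else 0.0


# Fabricated sample lines, for illustration only.
BOT = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
SAMPLE = [
    f'66.249.66.1 - - [10/Jan/2026:10:00:00 +0000] "GET /products/a HTTP/1.1" 200 5120 "-" "{BOT}"',
    f'66.249.66.1 - - [10/Jan/2026:10:00:05 +0000] "GET /products/a HTTP/1.1" 200 5120 "-" "{BOT}"',
    f'66.249.66.1 - - [10/Jan/2026:10:00:09 +0000] "GET /archive/old.pdf HTTP/1.1" 200 90210 "-" "{BOT}"',
    f'66.249.66.1 - - [10/Jan/2026:10:00:12 +0000] "GET /products/b HTTP/1.1" 500 312 "-" "{BOT}"',
    '203.0.113.9 - - [10/Jan/2026:10:00:15 +0000] "GET /products/a HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (X11; Linux x86_64)"',
]
```

Running `hits, errors = summarize_googlebot(SAMPLE)` and then `budget_share(hits, "/archive/")` shows what share of crawler requests land on archive paths, the same kind of measurement that would surface a crawl-budget leak like the PDF archive case described above.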

CDN configuration distributes server load geographically, reducing latency for international crawlers. Google operates crawler infrastructure from multiple data centers worldwide. A CDN with edge nodes in North America, Europe, and Asia ensures consistent sub-400ms response times regardless of crawler origin [[CDN and edge computing: How distributed infrastructure boosts SEO performance]].

Server monitoring tools like New Relic or Datadog track real-time crawler activity, alerting you to 5xx error spikes or response time degradation. Set up alerts for response times exceeding 600ms or error rates above 2% during peak crawl periods.
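The alert thresholds above can be expressed as a simple check over a window of crawler requests. This is a sketch under stated assumptions: `crawl_health` is a hypothetical helper, the use of the 95th percentile as the response-time signal is a choice made for this example (the thresholds themselves come from the text), and commercial monitoring tools provide their own alerting rules.

```python
def crawl_health(samples, max_ms=600, max_error_rate=0.02):
    """Return alert messages for a window of crawler requests.

    samples: iterable of (status_code, response_time_ms) tuples.
    max_ms: response-time threshold in milliseconds (600ms from the text).
    max_error_rate: allowed fraction of 5xx responses (2% from the text).
    """
    samples = list(samples)
    if not samples:
        return []

    alerts = []
    server_errors = sum(1 for status, _ in samples if 500 <= status <= 599)
    error_rate = server_errors / len(samples)
    if error_rate > max_error_rate:
        alerts.append(f"5xx error rate {error_rate:.1%} exceeds {max_error_rate:.1%}")

    # Nearest-rank 95th percentile, computed with integer ceiling division.
    times = sorted(ms for _, ms in samples)
    rank = (95 * len(times) + 99) // 100
    p95 = times[rank - 1]
    if p95 > max_ms:
        alerts.append(f"p95 response time {p95}ms exceeds {max_ms}ms")
    return alerts
```

A window of fast, successful responses produces no alerts, while a window in which 10% of requests return 503 after 900ms trips both the error-rate and response-time checks.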

Enterprise B2B sites publishing frequent content should treat server configuration as foundational infrastructure. Implement these optimizations systematically, monitor crawler behavior through log file analysis, and validate improvements through Google Search Console’s crawl stats. The technical investment delivers compounding returns through faster discovery of new content and efficient allocation of crawl resources [[Server-side performance and rendering: How server configuration impacts SEO rankings in 2026]].